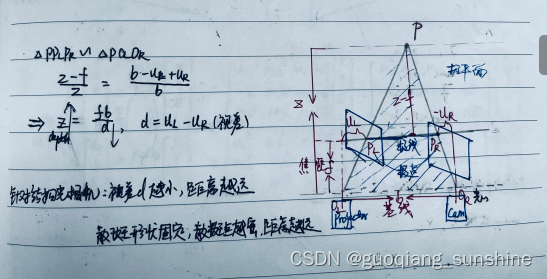

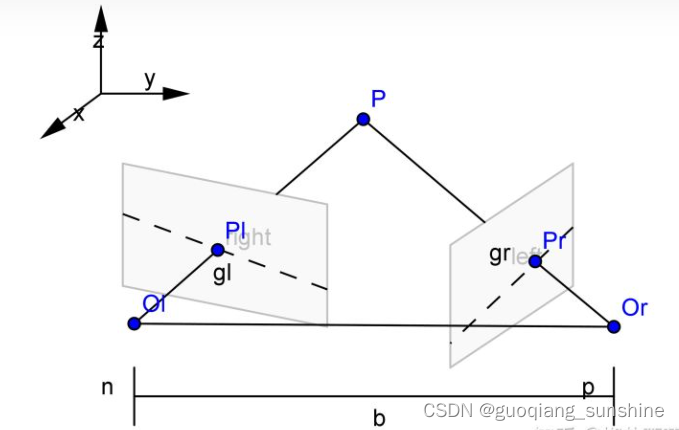

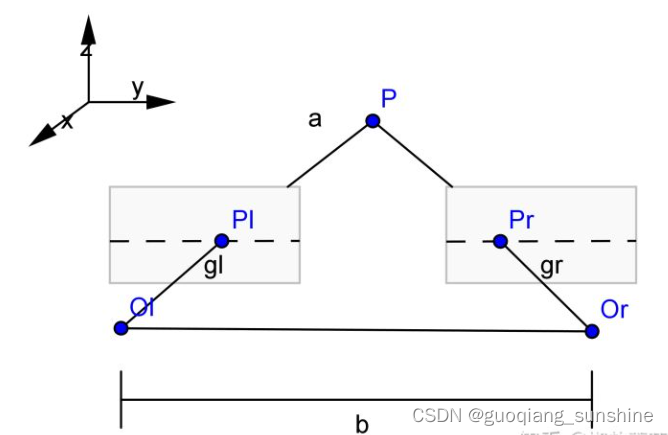

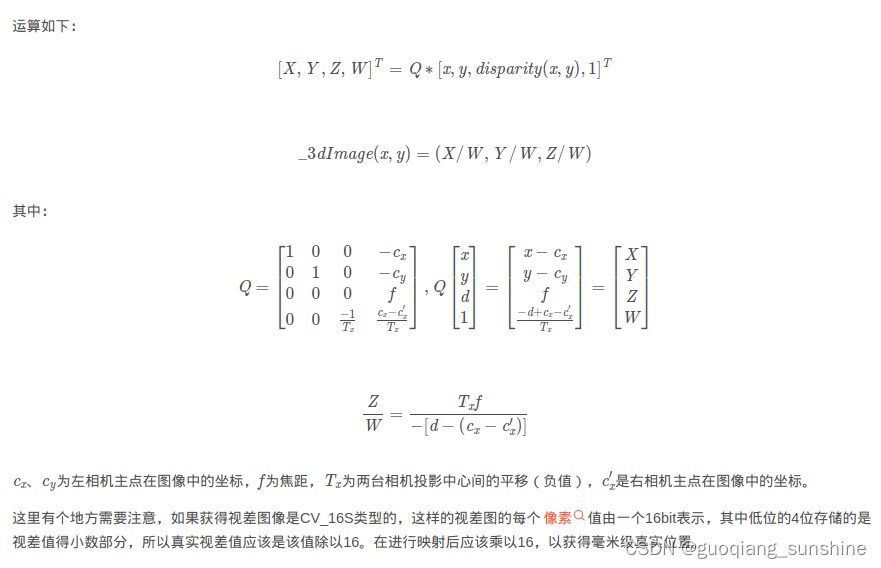

1. 数学模型

2.整体流程

获取标定与图像数据==>stereoRectify==>initUndistortRectifyMap==>remap==>bg/sgbm恢复出视差图==>l利用数学模型求解深度图==》深度图相关的应用/点云为基础的应用。(2D==>3D的转换)

3. 接口解析

3.1

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/core/core.hpp>

#include <opencv2/opencv.hpp>

using namespace cv;

using namespace std;

int blockSize = 0, uniquenessRatio = 0, numDisparities = 0;

Ptr<StereoBM> bm = StereoBM::create(16, 9);

Mat xyz; //三维坐标

Mat Q;//深度视差映射矩阵

Rect left_valid_roi, right_valid_roi;//图像校正之后,会对图像进行裁剪,这里的validRoi就是指裁剪之后的区域

Mat left_rectified_gray, right_rectified_gray;//左右摄像头校准后图像

Mat img;

Mat left_img, right_img;//左右摄像头原始图像

Mat left_rectified_img, right_rectified_img;//左右摄像头校准后图像

Vec3f point3;

float d;

Point origin; //鼠标按下的起始点

Rect selection; //定义矩形选框

bool selectObject = false; //是否选择对象

int mindisparity = 0;

int ndisparities = 64;

int SADWindowSize = 5;

void stereo_BM_match(int, void*)

{

bm->setBlockSize(2 * blockSize + 5); //SAD窗口大小,5~21之间为宜

bm->setROI1(left_valid_roi);

bm->setROI2(right_valid_roi);

bm->setPreFilterCap(31);

bm->setMinDisparity(0); //最小视差,默认值为0, 可以是负值,int型

bm->setNumDisparities(numDisparities * 16 + 16);//视差窗口,即最大视差值与最小视差值之差,窗口大小必须是16的整数倍,int型

bm->setTextureThreshold(10);

bm->setUniquenessRatio(uniquenessRatio);//uniquenessRatio主要可以防止误匹配

bm->setSpeckleWindowSize(100);

bm->setSpeckleRange(32);

bm->setDisp12MaxDiff(-1);

Mat disp, disp8;

bm->compute(left_rectified_gray, right_rectified_gray, disp);//输入图像必须为灰度图

disp.convertTo(disp8, CV_8UC1, 255 / ((numDisparities * 16 + 16) * 16.));//计算出的视差是CV_16S格式

reprojectImageTo3D(disp, xyz, Q, true); //在实际求距离时,ReprojectTo3D出来的X / W, Y / W, Z / W都要乘以16(也就是W除以16),才能得到正确的三维坐标信息。

xyz = xyz * 16;

imshow("disparity_BM", disp8);

}

void stereo_SGBM_match(int, void*)

{

Mat disp;

//SGBM

cv::Ptr<cv::StereoSGBM> sgbm = cv::StereoSGBM::create(mindisparity, ndisparities, SADWindowSize);

int P1 = 8 * left_rectified_img.channels() * SADWindowSize * SADWindowSize;

int P2 = 10 * left_rectified_img.channels() * SADWindowSize * SADWindowSize;

sgbm->setP1(P1);

sgbm->setP2(P2);

sgbm->setPreFilterCap(15);

sgbm->setUniquenessRatio(10);

sgbm->setSpeckleRange(2);

sgbm->setSpeckleWindowSize(100);

sgbm->setDisp12MaxDiff(1);

//sgbm->setMode(cv::StereoSGBM::MODE_HH);

sgbm->compute(left_rectified_img, right_rectified_img, disp);

disp.convertTo(disp, CV_32F, 1.0 / 16); //除以16得到真实视差值

Mat disp8U = Mat(disp.rows, disp.cols, CV_8UC1); //显示

normalize(disp, disp8U, 0, 255, NORM_MINMAX, CV_8UC1);

reprojectImageTo3D(disp8U, xyz, Q, true); //在实际求距离时,ReprojectTo3D出来的X / W, Y / W, Z / W都要乘以16(也就是W除以16),才能得到正确的三维坐标信息。

xyz = xyz * 16;

namedWindow("disparity_SGBM", WINDOW_AUTOSIZE);

imshow("disparity_SGBM", disp8U);

}

/*void stereo_GC_match(int, void*)

{

CvStereoGCState* state = cvCreateStereoGCState(16, 2);

left_disp_ = cvCreateMat(left->height, left->width, CV_32F);

right_disp_ = cvCreateMat(right->height, right->width, CV_32F);

cvFindStereoCorrespondenceGC(left, right, left_disp_, right_disp_, state, 0);

cvReleaseStereoGCState(&state);

CvMat* disparity_left_visual = cvCreateMat(size.height, size.width, CV_8U);

cvConvertScale(disparity_left, disparity_left_visual, -16);

}

*/

/*****描述:鼠标操作回调*****/

static void onMouse(int event, int x, int y, int, void*)

{

if (selectObject)

{

selection.x = MIN(x, origin.x);

selection.y = MIN(y, origin.y);

selection.width = std::abs(x - origin.x);

selection.height = std::abs(y - origin.y);

}

switch (event)

{

case EVENT_LBUTTONDOWN: //鼠标左按钮按下的事件

origin = Point(x, y);

selection = Rect(x, y, 0, 0);

selectObject = true;

//cout << origin << "in world coordinate is: " << xyz.at<Vec3f>(origin) << endl;

point3 = xyz.at<Vec3f>(origin);

point3[0];

//cout << "point3[0]:" << point3[0] << "point3[1]:" << point3[1] << "point3[2]:" << point3[2]<<endl;

cout << "世界坐标:" << endl;

cout << "x: " << point3[0] << " y: " << point3[1] << " z: " << point3[2] << endl;

d = point3[0] * point3[0] + point3[1] * point3[1] + point3[2] * point3[2];

d = sqrt(d); //mm

// cout << "距离是:" << d << "mm" << endl;

d = d / 10.0; //cm

cout << "距离是:" << d << "cm" << endl;

// d = d/1000.0; //m

// cout << "距离是:" << d << "m" << endl;

break;

case EVENT_LBUTTONUP: //鼠标左按钮释放的事件

selectObject = false;

if (selection.width > 0 && selection.height > 0)

break;

}

}

int main()

{

VideoCapture cap(1);

int FRAME_WIDTH = cap.get(CAP_PROP_FRAME_WIDTH);

int FRAME_HEIGHT = cap.get(CAP_PROP_FRAME_HEIGHT);

Mat aligned_rectified_img(FRAME_HEIGHT, FRAME_WIDTH, CV_8UC3);//校准+对齐后的图像

cout << "Resolution:<" << cap.get(CAP_PROP_FRAME_WIDTH) << "," << cap.get(CAP_PROP_FRAME_HEIGHT) << ">\n";

namedWindow("camera", WINDOW_AUTOSIZE);

// namedWindow("left_img",CV_WINDOW_NORMAL);

// namedWindow("right_img",CV_WINDOW_NORMAL);

// namedWindow("left_rectified_img",CV_WINDOW_NORMAL);

// namedWindow("right_rectified_img",CV_WINDOW_NORMAL);

// namedWindow("rectified_img",CV_WINDOW_NORMAL);

namedWindow("aligned_rectified_img", WINDOW_AUTOSIZE);

//resizeWindow("camera", 1200, 600);

// resizeWindow("aligned_rectified_img", 1200, 600);

Mat left_cameraMatrix = Mat::eye(3, 3, CV_64F);//左相机内参矩阵

left_cameraMatrix.at<double>(0, 0) = 2.762165790037839e+02;//Fx

left_cameraMatrix.at<double>(0, 1) = 0;

left_cameraMatrix.at<double>(0, 2) = 1.765880468329375e+02;//Cx

left_cameraMatrix.at<double>(1, 1) = 2.762317738515432e+02;//Fy

left_cameraMatrix.at<double>(1, 2) = 1.272320444598781e+02;//Cy

Mat left_distCoeffs = Mat::zeros(5, 1, CV_64F);//畸变系数

left_distCoeffs.at<double>(0, 0) = 0.065542106666972;//k1

left_distCoeffs.at<double>(1, 0) = -0.099179821896270;//k2

left_distCoeffs.at<double>(2, 0) = 0;//p1

left_distCoeffs.at<double>(3, 0) = 0;//p2

left_distCoeffs.at<double>(4, 0) = 0;

Mat right_cameraMatrix = Mat::eye(3, 3, CV_64F);//右相机内参矩阵

right_cameraMatrix.at<double>(0, 0) = 2.734695143541476e+02;//Fx

right_cameraMatrix.at<double>(0, 1) = 0;

right_cameraMatrix.at<double>(0, 2) = 1.724017536155269e+02;//Cx

right_cameraMatrix.at<double>(1, 1) = 2.733548075965133e+02;//Fy

right_cameraMatrix.at<double>(1, 2) = 1.255695004672240e+02;//Cy

Mat right_distCoeffs = Mat::zeros(5, 1, CV_64F);//畸变系数

right_distCoeffs.at<double>(0, 0) = 0.067619149443979;//k1

right_distCoeffs.at<double>(1, 0) = -0.104286472771764;//k2

right_distCoeffs.at<double>(2, 0) = 0;//p1

right_distCoeffs.at<double>(3, 0) = 0;//p2

right_distCoeffs.at<double>(4, 0) = 0;

Mat rotation_matrix = Mat::zeros(3, 3, CV_64F);//旋转矩阵

rotation_matrix.at<double>(0, 0) = 0.999997933684708;

rotation_matrix.at<double>(0, 1) = 5.541735042905797e-04;

rotation_matrix.at<double>(0, 2) = -0.001955893157243;

rotation_matrix.at<double>(1, 0) = -5.560064711997943e-04;

rotation_matrix.at<double>(1, 1) = 0.999999406695233;

rotation_matrix.at<double>(1, 2) = -9.367315446314680e-04;

rotation_matrix.at<double>(2, 0) = 0.001955372884999;

rotation_matrix.at<double>(2, 1) = 9.378170983011573e-04;

rotation_matrix.at<double>(2, 2) = 0.999997648505221;

Mat rotation_vector;//旋转矩阵

Rodrigues(rotation_matrix, rotation_vector);//旋转矩阵转化为旋转向量,罗德里格斯变换

Mat translation_vector = Mat::zeros(3, 1, CV_64F);//平移向量

translation_vector.at<double>(0, 0) = -74.303646210221200;

translation_vector.at<double>(1, 0) = -0.208289299602746;

translation_vector.at<double>(2, 0) = -1.203122420388863;

Mat R1, R2;//左右相机的3x3矫正变换(旋转矩阵)

Mat P1, P2;//左右相机新的坐标系统(矫正过的)输出 3x4 的投影矩阵

// Mat Q;//深度视差映射矩阵

// Rect left_valid_roi, right_valid_roi;//图像校正之后,会对图像进行裁剪,这里的validRoi就是指裁剪之后的区域

Mat rmap[2][2];//映射表 必须为:CV_16SC2/CV_32FC1/CV_32FC2

Size imageSize;

imageSize = Size(FRAME_WIDTH >> 1, FRAME_HEIGHT);

/*

立体校正的时候需要两幅图像共面并且行对准 以使得立体匹配更加的可靠

使得两幅图像共面的方法就是把两个摄像头的图像投影到一个公共成像面上,这样每幅图像从本图像平面投影到公共图像平面都需要一个旋转矩阵R

stereoRectify 这个函数计算的就是从图像平面投影都公共成像平面的旋转矩阵R1,R2。 R1,R2即为左右相机平面行对准的校正旋转矩阵。

左相机经过R1旋转,右相机经过R2旋转之后,两幅图像就已经共面并且行对准了。

其中P1,P2为两个相机的投影矩阵,其作用是将3D点的坐标转换到图像的2D点的坐标:P*[X Y Z 1]' =[x y w]

Q矩阵为重投影矩阵,即矩阵Q可以把2维平面(图像平面)上的点投影到3维空间的点:Q*[x y d 1] = [X Y Z W]。其中d为左右两幅图像的视差

*/

//双目校准

stereoRectify(left_cameraMatrix, left_distCoeffs,//左摄像头内参和畸变系数

right_cameraMatrix, right_distCoeffs,//右摄像头内参和畸变系数

imageSize, rotation_vector, translation_vector,//图像大小,右摄像头相对于左摄像头旋转矩阵,平移向量

R1, R2, P1, P2, Q,//输出的参数

CALIB_ZERO_DISPARITY, -1, imageSize, &left_valid_roi, &right_valid_roi);

//Precompute maps for cv::remap().

//用来计算畸变映射,输出的X / Y坐标重映射参数,remap把求得的映射应用到图像上。

initUndistortRectifyMap(left_cameraMatrix, left_distCoeffs, R1, P1, imageSize, CV_16SC2, rmap[0][0], rmap[0][1]);

initUndistortRectifyMap(right_cameraMatrix, right_distCoeffs, R2, P2, imageSize, CV_16SC2, rmap[1][0], rmap[1][1]);

while (1)

{

cap >> img;

left_img = img(Rect(0, 0, FRAME_WIDTH >> 1, FRAME_HEIGHT));

right_img = img(Rect(FRAME_WIDTH >> 1, 0, FRAME_WIDTH >> 1, FRAME_HEIGHT));

//经过remap之后,左右相机的图像已经共面并且行对准了

remap(left_img, left_rectified_img, rmap[0][0], rmap[0][1], INTER_LINEAR);

remap(right_img, right_rectified_img, rmap[1][0], rmap[1][1], INTER_LINEAR);

//立体匹配生成深度图需要用到灰度图

cvtColor(left_rectified_img, left_rectified_gray, COLOR_BGR2GRAY);

cvtColor(right_rectified_img, right_rectified_gray, COLOR_BGR2GRAY);

//复制左相机校准图像到总图像上

for (int i = 0; i < left_rectified_img.rows; i++)

{

for (int j = 0; j < left_rectified_img.cols; j++)

{

aligned_rectified_img.at<Vec3b>(i, j)[0] = left_rectified_img.at<Vec3b>(i, j)[0];

aligned_rectified_img.at<Vec3b>(i, j)[1] = left_rectified_img.at<Vec3b>(i, j)[1];

aligned_rectified_img.at<Vec3b>(i, j)[2] = left_rectified_img.at<Vec3b>(i, j)[2];

}

}

//复制右相机校准图像到总图像上

for (int i = 0; i < right_rectified_img.rows; i++)

{

for (int j = 0; j < right_rectified_img.cols; j++)

{

aligned_rectified_img.at<Vec3b>(i, j + (FRAME_WIDTH >> 1))[0] = right_rectified_img.at<Vec3b>(i, j)[0];

aligned_rectified_img.at<Vec3b>(i, j + (FRAME_WIDTH >> 1))[1] = right_rectified_img.at<Vec3b>(i, j)[1];

aligned_rectified_img.at<Vec3b>(i, j + (FRAME_WIDTH >> 1))[2] = right_rectified_img.at<Vec3b>(i, j)[2];

}

}

//红色框出校正后的左右图像

rectangle(img, left_valid_roi, Scalar(0, 0, 255), 2, 8);

rectangle(img, Rect(right_valid_roi.x + (FRAME_WIDTH >> 1), right_valid_roi.y, right_valid_roi.width, right_valid_roi.height), Scalar(0, 0, 255), 2, 8);

//做绿色线增强对比

for (int i = 0; i < aligned_rectified_img.rows; i += 32)

{

line(aligned_rectified_img, Point(0, i), Point(aligned_rectified_img.cols, i), Scalar(0, 255, 0), 1, LINE_8);

}

imshow("camera", img);

imshow("left_img",left_img);

imshow("right_img",right_img);

imshow("left_rectified_img",left_rectified_img);

imshow("right_rectified_img",right_rectified_img);

imshow("aligned_rectified_img", aligned_rectified_img);

stereo_BM_match(0, 0);

stereo_SGBM_match(0, 0);

//创建SAD窗口 Trackbar

createTrackbar("BlockSize:\n", "disparity_BM", &blockSize, 8, stereo_BM_match);

// 创建视差唯一性百分比窗口 Trackbar

createTrackbar("UniquenessRatio:\n", "disparity_BM", &uniquenessRatio, 50, stereo_BM_match);

// 创建视差窗口 Trackbar

createTrackbar("NumDisparities:\n", "disparity_BM", &numDisparities, 16, stereo_BM_match);

createTrackbar("SADWindowSize:\n", "disparity_SGBM", &SADWindowSize, 30, stereo_SGBM_match);

setMouseCallback("disparity_SGBM", onMouse, 0);

setMouseCallback("disparity_BM", onMouse, 0);

int key = waitKey(30);

if (key == 27)//按下Esc退出

return 0;

}

}

#include <iostream>

#include <fstream>

#include <map>

#include <vector>

#include <cmath>

#include <opencv2/highgui.hpp>

#include <opencv2/calib3d.hpp>

#include <opencv2/imgproc.hpp>

using namespace std;

//****这里设置三种颜色来让程序运行的更醒目****

#define WHITE "\033[37m"

#define BOLDRED "\033[1m\033[31m"

#define BOLDGREEN "\033[1m\033[32m"

//****相机内参****

struct CAMERA_INTRINSIC_PARAMETERS

{

double cx,cy,fx,fy,baseline,scale;

};

struct FILE_FORM

{

cv::Mat left,right;//存放左右视图数据

string dispname,depthname,colorname;//存放三种输出数据的文件名

};

//****读取配置文件****

class ParameterReader

{

public:

ParameterReader(string filename = "./parameters.txt")//配置文件目录

{

ifstream fin(filename.c_str());

if(!fin)

{

cerr<<BOLDRED"can't find parameters file!"<<endl;

return;

}

while(!fin.eof())

{

string str;

getline(fin,str);

if(str[0] == '#')//遇到’#‘视为注释

{

continue;

}

int pos = str.find("=");//遇到’=‘将其左右值分别赋给key和alue

if (pos == -1)

{

continue;

}

string key = str.substr(0,pos);

string value = str.substr(pos+1,str.length());

data[key] = value;

if (!fin.good())

{

break;

}

}

}

string getData(string key)//获取配置文件参数值

{

map<string,string>::iterator iter = data.find(key);

if (iter == data.end())

{

cerr<<BOLDRED"can't find:"<<key<<" parameters!"<<endl;

return string("NOT_FOUND");

}

return iter->second;

}

public:

map<string,string> data;

};

FILE_FORM readForm(int index,ParameterReader pd);//存入当前序列左右视图数据和三种输出结果文件名

void stereoSGBM(cv::Mat lpng,cv::Mat rpng,string filename,cv::Mat &disp);//SGBM方法获取视差图

void stereoBM(cv::Mat lpng,cv::Mat rpng,string filename,cv::Mat &disp);//BM方法获取视差图

void disp2Depth(cv::Mat disp,cv::Mat &depth,CAMERA_INTRINSIC_PARAMETERS camera);//由视察图计算深度图

int main(int argc, char const *argv[])

{

ParameterReader pd;//读取配置文件

CAMERA_INTRINSIC_PARAMETERS camera;//相机内参结构赋值

camera.fx = atof(pd.getData("camera.fx").c_str());

camera.fy = atof(pd.getData("camera.fy").c_str());

camera.cx = atof(pd.getData("camera.cx").c_str());

camera.cy = atof(pd.getData("camera.cy").c_str());

camera.baseline = atof(pd.getData("camera.baseline").c_str());

camera.scale = atof(pd.getData("camera.scale").c_str());

int startIndex = atoi(pd.getData("start_index").c_str());//起始序列

int endIndex = atoi(pd.getData("end_index").c_str());//截止序列

bool is_color = pd.getData("is_color") == string("yes");//判断是否要输出彩色深度图

cout<<BOLDRED"......START......"<<endl;

for (int currIndex = startIndex;currIndex < endIndex;currIndex++)//从起始序列循环至截止序列

{

cout<<BOLDGREEN"Reading file: "<<currIndex<<endl;

FILE_FORM form = readForm(currIndex,pd);//获取当前序列的左右视图以及输出结果文件名

cv::Mat disp,depth,color;

//****判断使用何种算法计算视差图****

if (pd.getData("algorithm") == string("SGBM"))

{

stereoSGBM(form.left,form.right,form.dispname,disp);

}

else if (pd.getData("algorithm") == string("BM"))

{

stereoBM(form.left,form.right,form.dispname,disp);

}

else

{

cout<<BOLDRED"Algorithm is wrong!"<<endl;

return 0;

}

disp2Depth(disp,depth,camera);//输出深度图

cv::imwrite(form.depthname,depth);

cout<<WHITE"Depth saved!"<<endl;

//****判断是否输出彩色深度图****

if (is_color)

{

cv::applyColorMap(depth,color,cv::COLORMAP_JET);//转彩色图

cv::imwrite(form.colorname,color);

cout<<WHITE"Color saved!"<<endl;

}

}

cout<<BOLDRED"......END......"<<endl;

return 0;

}

FILE_FORM readForm(int index,ParameterReader pd)

{

FILE_FORM f;

string lpngDir = pd.getData("left_dir");//获取左视图输入目录名

string rpngDir = pd.getData("right_dir");//获取右视图输入目录名

string dispDir = pd.getData("disp_dir");//获取视差图输出目录名

string depthDir = pd.getData("depth_dir");//获取深度图输出目录名

string colorDir = pd.getData("color_dir");//获取彩色深度图输出目录名

string rgbExt = pd.getData("rgb_extension");//获取图片数据格式后缀名

//输出当前文件序号(使用的TUM数据集,其双目视图命名从000000至004540,详情参看博文末尾ps)

string numzero;

if ( index >= 0 && index <= 9 )

{

numzero = "00000";

}

else if ( index >= 10 && index <= 99 )

{

numzero = "0000";

}

else if ( index >= 100 && index <= 999 )

{

numzero = "000";

}

else if ( index >= 1000 && index <= 9999 )

{

numzero = "00";

}

else if ( index >= 10000 && index <= 99999 )

{

numzero = "0";

}

//获取左视图文件名

stringstream ss;

ss<<lpngDir<<numzero<<index<<rgbExt;

string filename;

ss>>filename;

f.left = cv::imread(filename,0);//这里要获取单通道数据

//获取右视图文件名

ss.clear();

filename.clear();

ss<<rpngDir<<numzero<<index<<rgbExt;

ss>>filename;

f.right = cv::imread(filename,0);//这里要获取单通道数据

//获取深度图输出文件名

ss.clear();

filename.clear();

ss<<depthDir<<index<<rgbExt;

ss>>filename;

f.depthname = filename;

//获取视差图输出文件名

ss.clear();

filename.clear();

ss<<dispDir<<index<<rgbExt;

ss>>filename;

f.dispname = filename;

//获取彩色深度图输出文件名

ss.clear();

filename.clear();

ss<<colorDir<<index<<rgbExt;

ss>>filename;

f.colorname = filename;

return f;

}

void stereoSGBM(cv::Mat lpng,cv::Mat rpng,string filename,cv::Mat &disp)

{

disp.create(lpng.rows,lpng.cols,CV_16S);

cv::Mat disp1 = cv::Mat(lpng.rows,lpng.cols,CV_8UC1);

cv::Size imgSize = lpng.size();

cv::Ptr<cv::StereoSGBM> sgbm = cv::StereoSGBM::create();

int nmDisparities = ((imgSize.width / 8) + 15) & -16;//视差搜索范围

int pngChannels = lpng.channels();//获取左视图通道数

int winSize = 5;

sgbm->setPreFilterCap(31);//预处理滤波器截断值

sgbm->setBlockSize(winSize);//SAD窗口大小

sgbm->setP1(8*pngChannels*winSize*winSize);//控制视差平滑度第一参数

sgbm->setP2(32*pngChannels*winSize*winSize);//控制视差平滑度第二参数

sgbm->setMinDisparity(0);//最小视差

sgbm->setNumDisparities(nmDisparities);//视差搜索范围

sgbm->setUniquenessRatio(5);//视差唯一性百分比

sgbm->setSpeckleWindowSize(100);//检查视差连通区域变化度的窗口大小

sgbm->setSpeckleRange(32);//视差变化阈值

sgbm->setDisp12MaxDiff(1);//左右视差图最大容许差异

sgbm->setMode(cv::StereoSGBM::MODE_SGBM);//采用全尺寸双通道动态编程算法

sgbm->compute(lpng,rpng,disp);

disp.convertTo(disp1,CV_8U,255 / (nmDisparities*16.));//转8位

cv::imwrite(filename,disp1);

cout<<WHITE"Disp saved!"<<endl;

}

void stereoBM(cv::Mat lpng,cv::Mat rpng,string filename,cv::Mat &disp)

{

disp.create(lpng.rows,lpng.cols,CV_16S);

cv::Mat disp1 = cv::Mat(lpng.rows,lpng.cols,CV_8UC1);

cv::Size imgSize = lpng.size();

cv::Rect roi1,roi2;

cv::Ptr<cv::StereoBM> bm = cv::StereoBM::create(16,9);

int nmDisparities = ((imgSize.width / 8) + 15) & -16;//视差搜索范围

bm->setPreFilterType(CV_STEREO_BM_NORMALIZED_RESPONSE);//预处理滤波器类型

bm->setPreFilterSize(9);//预处理滤波器窗口大小

bm->setPreFilterCap(31);//预处理滤波器截断值

bm->setBlockSize(9);//SAD窗口大小

bm->setMinDisparity(0);//最小视差

bm->setNumDisparities(nmDisparities);//视差搜索范围

bm->setTextureThreshold(10);//低纹理区域的判断阈值

bm->setUniquenessRatio(5);//视差唯一性百分比

bm->setSpeckleWindowSize(100);//检查视差连通区域变化度窗口大小

bm->setSpeckleRange(32);//视差变化阈值

bm->setROI1(roi1);

bm->setROI2(roi2);

bm->setDisp12MaxDiff(1);//左右视差图最大容许差异

bm->compute(lpng,rpng,disp);

disp.convertTo(disp1,CV_8U,255 / (nmDisparities*16.));

cv::imwrite(filename,disp1);

cout<<WHITE"Disp saved!"<<endl;

}

void disp2Depth(cv::Mat disp,cv::Mat &depth,CAMERA_INTRINSIC_PARAMETERS camera)

{

depth.create(disp.rows,disp.cols,CV_8UC1);

cv::Mat depth1 = cv::Mat(disp.rows,disp.cols,CV_16S);

for (int i = 0;i < disp.rows;i++)

{

for (int j = 0;j < disp.cols;j++)

{

if (!disp.ptr<ushort>(i)[j])//防止除0中断

continue;

depth1.ptr<ushort>(i)[j] = camera.scale * camera.fx * camera.baseline / disp.ptr<ushort>(i)[j];

}

}

depth1.convertTo(depth,CV_8U,1./256);//转8位

}

3.2 stereoRectify

void stereoRectify( InputArray cameraMatrix1, InputArray distCoeffs1,

InputArray cameraMatrix2, InputArray distCoeffs2,

Size imageSize, InputArray R, InputArray T,

OutputArray R1, OutputArray R2,

OutputArray P1, OutputArray P2,

OutputArray Q, int flags = CALIB_ZERO_DISPARITY,

double alpha = -1, Size newImageSize = Size(),

CV_OUT Rect* validPixROI1 = 0, CV_OUT Rect* validPixROI2 = 0 );

参数:

R,tvec: 是由相机1变换到相机2的变换矩阵,按照视觉这边的习惯,一般世界坐标系下的点变换到相机坐标系下的点

R1,R2:对两个相机进行旋转矫正的旋转变换矩阵,为世界系到两个相机的变换

P1,P2:两个矫正畸变后的相机投影矩阵,相对的坐标系还是原来的相机1所在坐标系

flags: 一般CALIB_ZERO_DISPARITY

alpha: -1或者不设置则使用自动剪切,0的时候没有黑边,1的时候保留所有原图像素,会有黑边

newImageSize: 默认与原图相同,所以会有剪切,该参数需要跟initUndistortRectifyMap相同,设置大一些会在大畸变的时候保留更多细节.

使用默认参数,即自动裁剪,保留原始图像大小,结果是图像自动缩放,无黑边。

使用alpha=1.0时,原图所有像素都被保留,即不裁剪,保留黑边,但是图像会缩放,结果中P矩阵中K明显进行了缩放。

alpha: 0.0 表示去除黑边后的最大四边形,1.0表示保留原图所有像素,即保留黑边

undistort()函数:

主要针对单张图像进行去畸变操作,使用默认参数的时候主要控制参数是利用newCameraMatrix来完成,而newCameraMatrix一般由getOptimalNewCameraMatrix()函数得到,getOptimalNewCameraMatrix()函数可以控制的参数有:

alpha: 0.0 表示去除黑边后的最大四边形,1.0表示保留原图所有像素,即保留黑边

newImageSize:不设置的话,默认与原图相等,设置了的话会在alpha作用之后对图像进行缩放

总的来说alpha和newImageSize是两个互不干扰的参数,alpha只管是否对图像进行裁剪,而newImageSize只负责把图像进行缩放,这二者都会对相机参数造成影响.

主要完成对双目图像进行调整,计算出用于立体校正的参数,即:给定两个相机的K、畸变参数、外参,计算出相应的R1,R2,P1,P2 矩阵. 作用如公式所示:

在initUndistortRectifyMap的时候,对归一化平面上的点坐标进行旋转的修正,效果如下图,P1,P2矩阵与给定的alpha和newImageSize参数有关,alpha只控制有无黑边(即有效像素)newImageSize控制图像缩放.

3.3 reprojectImageTo3D函数

该函数将视差图,通过投影矩阵Q,得到一副映射图,图像大小与视差图相同,且每个像素具有三个通道,分别存储了该像素位置在相机坐标系下的三维点坐标在x, y, z,三个轴上的值,即每个像素的在相机坐标系下的三维坐标。

void cv::reprojectImageTo3D(

InputArray disparity, //视差图像

OutputArray _3dImage, //映射后存储三维坐标的图像

InputArray Q, //重投影矩阵 通过stereoRectify得到

bool handleMissingValues = false, //计算得到的非正常值是否给值,如果为true则给值10000

int ddepth = -1 //输出类型 -1 即默认为CV_32FC3 还可以是 CV_16S, CV_32S, CV_32F

)

4. 参考资料

- https://stackoverflow.com/questions/22418846/reprojectimageto3d-in-opencv

- https://docs.opencv.org/3.4/d9/d0c/group__calib3d.html#ga617b1685d4059c6040827800e72ad2b6

- https://www.coder.work/article/114127

版权声明:本文内容由互联网用户自发贡献,该文观点与技术仅代表作者本人。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。如发现本站有涉嫌侵权/违法违规的内容, 请发送邮件至 dio@foxmail.com 举报,一经查实,本站将立刻删除。